We recently made some changes to our data governance in my company that required me to change sharing permissions for a significant number of the Accounts in our Dynamics system. I needed to find all Accounts that were shared with a specific Team so I could then amend the permissions the Team had on each record.

As you may be aware, information relating to the Sharing of records in Dataverse/Dynamics 365 is held in the PrincipalObjectAccess table, so we will need to run a query against the POA table to find the records shared with our Team. For more info on the POA Table I’d recommend reading this blog post; it refers to CRM 2011, but it’s still valid information today.

There is no out-of-the-box way to search the POA table with Advanced Find so in order to to run the query you will need to use the FetchXML Builder in the XRMToolBox, created by Jonas Rapp, to create a query. The FetchXML Builder makes running advanced queries like this really easy, so if you’ve not used it before I’d highly recommend you start using it. If you’ve found it helpful then please consider donating a little bit to Jonas to thank him for creating this tool by clicking this link.

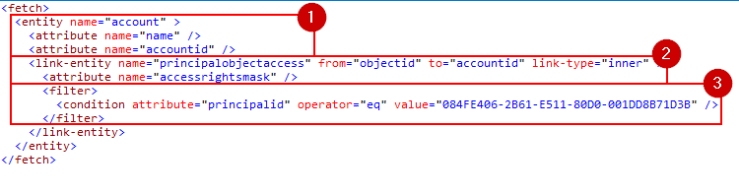

The FetchXML Query I used is:

<fetch>

<entity name="account" >

<attribute name="name" />

<attribute name="accountid" />

<link-entity name="principalobjectaccess" from="objectid" to="accountid" link-type="inner" >

<attribute name="accessrightsmask" />

<filter>

<condition attribute="principalid" operator="eq" value="[YOUR_GUID_HERE]" />

</filter>

</link-entity>

</entity>

</fetch>

To understand this FetchXML it helps to be aware some of the key columns from the PrincipalObjectAccess table

| Column | Purpose |

| principalId | the GUID of the user or team with which the record is shared |

| objectId | the GUID of the record that has been shared |

| accessrightsmask | A number that represents the level of access rights to the record for the team or user with whom it has been shared |

With this information we can break down the FetchXML query as follows:

- Return a list of Accounts with the Attributes of the Account Name and AccounID

- Where there is an entry in the PrincipalObjectAccess table for the Account

- Where the Account has been shared with the named User/Team

I’ve also added the accessrightsmask as an attribute from the principalobjectaccess table to show me what rights the list of Accounts currently have. The returned list of results looks like this:

If you want to understand more about accessrightsmask codes then I’d recommend downloading Scott Sewell’s POA Decoder Ring from the link on this post on the CRM Tip of the Day blog.