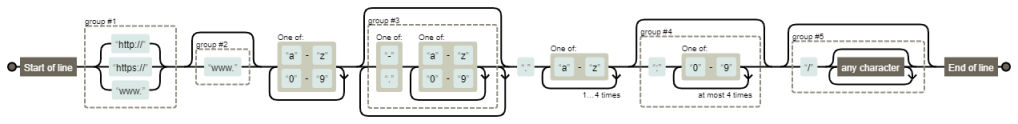

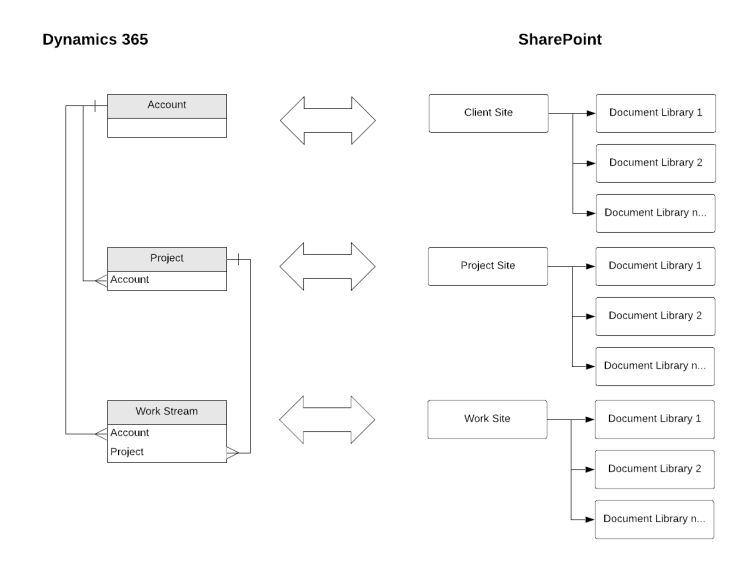

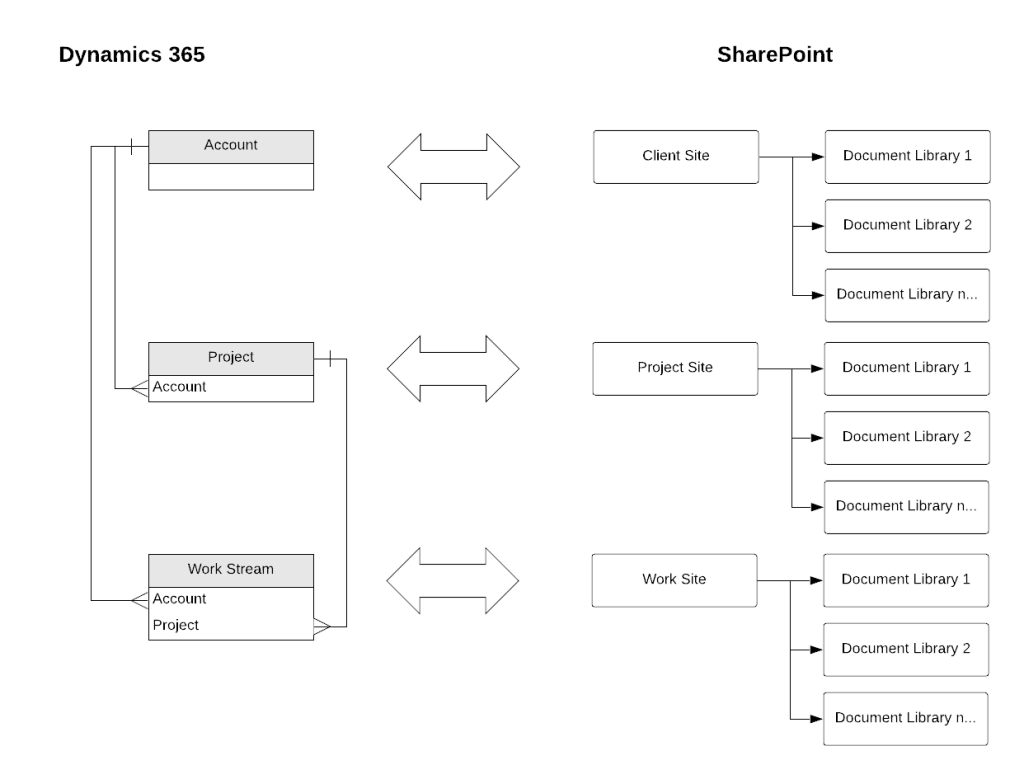

In my previous post I discussed how to improve the integration between Dynamics 365 and SharePoint by creating a site collection for each record, instead of using the OOTB integration.

Now that you have a site, you should think about how to structure your data. SharePoint best practice generally favours metadata over folders, but if you do need to segregate information, the recommendation is to use Document Libraries.

In this post I will demonstrate how you can add Document Libraries to the SharePoint site for a record and have them appear on the Dynamics 365 record. There are two approaches for this:

- Triggering the creation of a Document Library from the Dynamics 365 record

- Creating the Document Library directly in SharePoint and syncing with D365

For each method, I have created a Power Automate Flow and I’ll run through the key features below.

Creating a Document Library from Dynamics 365

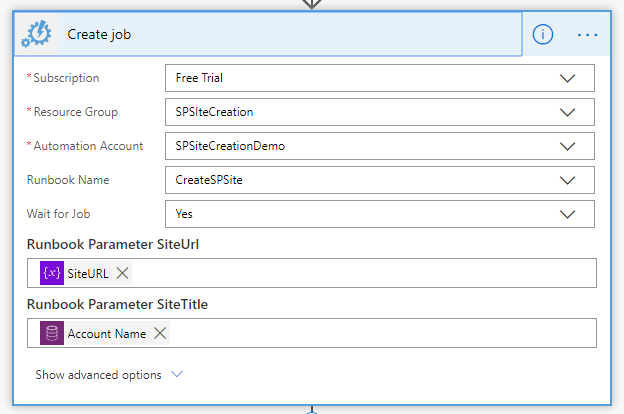

1. When a Record is Selected – the first step is to use the “Common Data Service – When a Record is Selected” trigger. This trigger is only available in the “old” CDS connector (see this great post from Sara Lagerquist for a comparison between the CDS connectors). To use this trigger, the Flow must not be in a solution; otherwise you will not be able to select it. One of the best things about this trigger is that you can also request input values from Users, so in this case we’re going to ask Users to input their desired Document Library name.

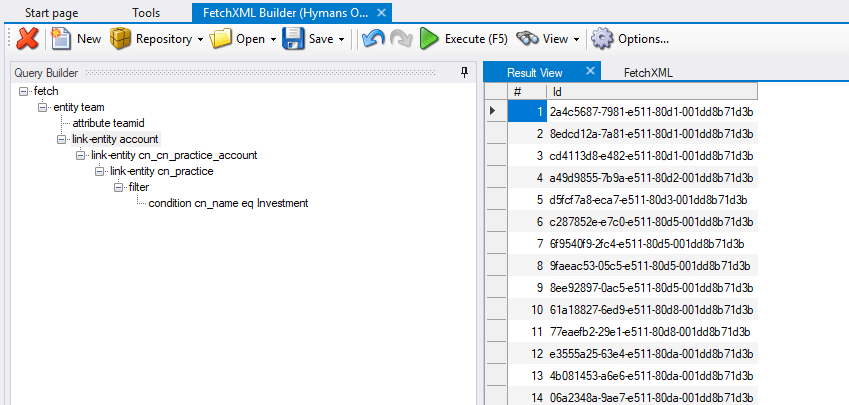

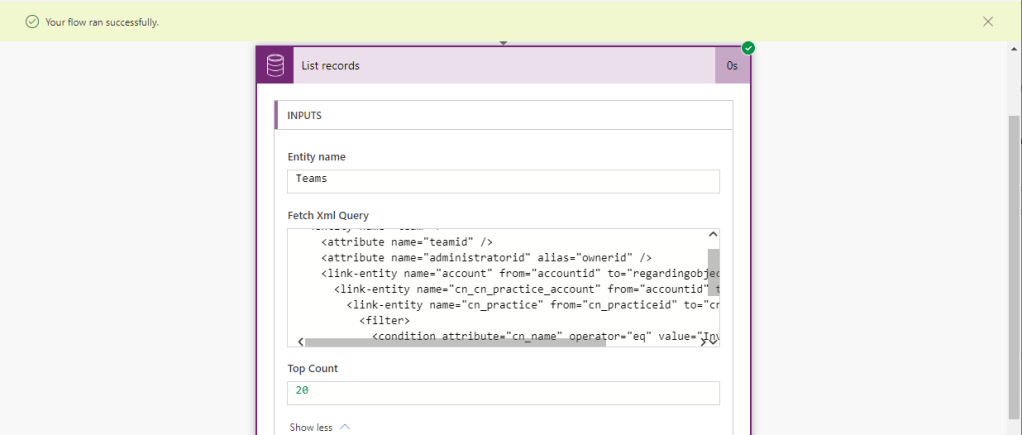

2. Get Related SharePoint Site – next we’ll use a List Records action to get the related SharePoint site. In this step we’ll use an OData filter to look for sites where the Absolute URL matches the SharePoint site URL for our Account.

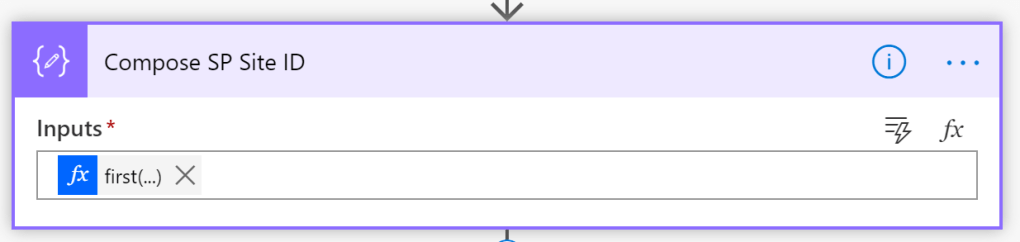
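
As a rough illustration of the filter in this step, here is a minimal Python sketch of how the OData filter string could be built. The function name is hypothetical, and `absoluteurl` is my assumption for the logical name of the Absolute URL field on the SharePoint Site entity; check it against your own environment.

```python
def build_site_filter(site_url: str) -> str:
    """Return an OData filter that matches a SharePoint Site record by URL."""
    # OData string literals escape a single quote by doubling it.
    escaped = site_url.replace("'", "''")
    # 'absoluteurl' is an assumed logical name for the Absolute URL field.
    return f"absoluteurl eq '{escaped}'"


if __name__ == "__main__":
    print(build_site_filter("https://contoso.sharepoint.com/sites/acme"))
```

In the Flow itself, the URL would come from the custom site URL field on the Account record rather than a hard-coded value.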

3. Compose SharePoint Site ID – we will use a Compose step to extract the SharePoint site ID from the SharePoint site record returned in the previous step. As we’ll only have one SharePoint site per record, we don’t need an Apply to Each control; instead, we can use a First expression to extract the specific field value. The expression I’ve used is:

first(outputs('Get_Related_SP_Site')?['body/value'])?['sharepointsiteid']
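
To make the behaviour of that expression concrete, here is a small Python sketch of the same idea: take the first returned record and read one field, returning nothing instead of failing when no record came back. The function name and the nested `body` / `value` shape are assumptions used for illustration only.

```python
from typing import Any, Dict, Optional


def first_site_id(list_records_output: Dict[str, Any]) -> Optional[str]:
    """Mirror first(...)?['sharepointsiteid'] with null-safe lookups."""
    # Assumed shape: {"body": {"value": [ {...record...}, ... ]}}
    records = (list_records_output.get("body") or {}).get("value") or []
    if not records:
        # No related SharePoint site was found for the record.
        return None
    return records[0].get("sharepointsiteid")


if __name__ == "__main__":
    sample = {"body": {"value": [{"sharepointsiteid": "1111-2222"}]}}
    print(first_site_id(sample))
    print(first_site_id({"body": {"value": []}}))
```

An empty result here is the case a guard condition in the Flow would need to catch before any later step tries to use the site ID.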

4. Create Document Library – we will use the “Send HTTP Request to SharePoint” action to create a Document Library on our Account site. This action lets you use the full SharePoint REST API to perform actions that aren’t available with the OOTB connector. Microsoft have some great resources available to help you understand the REST API, and I’m simply following the instructions available here to create a Document Library. (The instructions refer to working with Lists, but Document Libraries are really just a fancy kind of list.)

There are a few aspects to this action we need to fill in:

- Site Address – we will use the Site Address from the custom field on our Account record

- Method – as we’re creating a Document Library we’re going to be using the POST method

- Headers – we will specify that we are sending a JSON request (content-type) and expect JSON back (accept)

- Body – Microsoft have helpfully outlined the key elements of the body JSON at the link. The key values we need to specify are:

- BaseTemplate: 101 – this signifies that this is a Document Library

- Title – this will be the value that is input in our trigger

The Header JSON is:

    {
      "Accept": "application/json;odata=verbose",
      "Content-Type": "application/json;odata=verbose"
    }

The Body JSON is:

    {
      "__metadata": {
        "type": "SP.List"
      },
      "BaseTemplate": 101,
      "ContentTypesEnabled": false,
      "Description": "Created by Flow",
      "Title": "@{triggerBody()['text']}"
    }

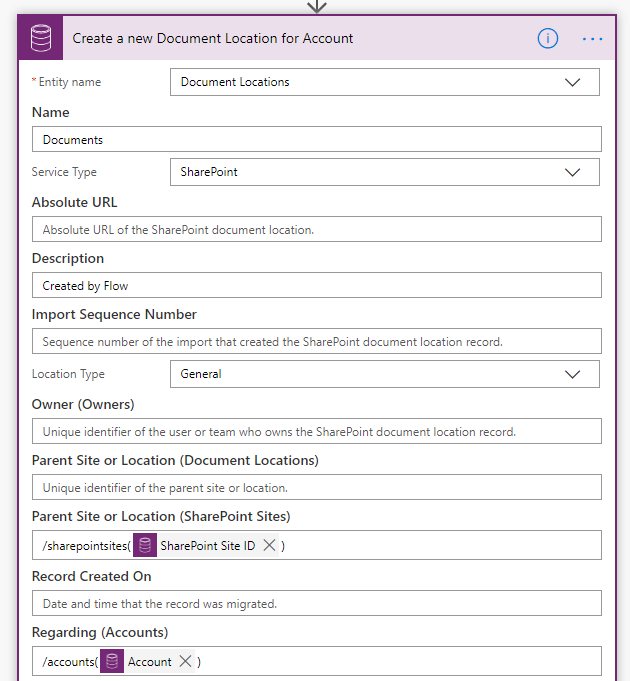
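
For anyone who wants to see the request outside of Flow, the sketch below assembles the same headers and body in Python without sending anything. The function name is hypothetical, and the `_api/web/lists` path is taken from Microsoft's REST documentation for working with lists rather than from the text of this post. Authentication is left out entirely, since the Flow action handles that through its connection.

```python
import json
import urllib.request


def build_create_library_request(site_url: str, title: str) -> urllib.request.Request:
    """Build (but do not send) the POST that creates a Document Library."""
    body = {
        "__metadata": {"type": "SP.List"},
        "BaseTemplate": 101,  # 101 marks the list as a Document Library
        "ContentTypesEnabled": False,
        "Description": "Created by Flow",
        "Title": title,  # the value the User typed into the trigger
    }
    headers = {
        "Accept": "application/json;odata=verbose",
        "Content-Type": "application/json;odata=verbose",
    }
    return urllib.request.Request(
        url=site_url.rstrip("/") + "/_api/web/lists",
        data=json.dumps(body).encode("utf-8"),
        headers=headers,
        method="POST",
    )


if __name__ == "__main__":
    req = build_create_library_request(
        "https://contoso.sharepoint.com/sites/acme", "Contracts"
    )
    print(req.get_method(), req.full_url)
    print(req.data.decode("utf-8"))
```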

5. Create a Document Location – the final step is to use a Create a Record action to create a Document Location record in Dynamics 365. We need to input the following information:

- Name – we will use the input value from the trigger

- Service Type Value – set this to SharePoint

- Relative URL – we will use the input value from the trigger

- Parent Site or Location ID – we will use the output from the Compose step at Step 3 above

- Parent Site or Location Type – set this to SharePointSites

- Regarding Object ID – we will set this to the Account ID from the trigger

- Regarding Object Type – set this to Accounts

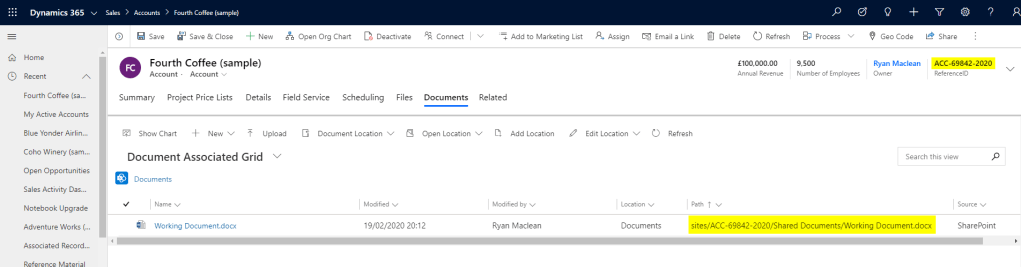
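
If you were creating the same Document Location through the Dataverse Web API instead of the Create a Record action, the payload might look roughly like the sketch below. The function name is hypothetical, and the `@odata.bind` property names are my assumption of the navigation properties for the Account and SharePoint Site lookups; verify them against your environment's metadata. I have left the Service Type out because its option value should be checked rather than guessed.

```python
from typing import Any, Dict


def build_document_location(
    library_name: str, site_id: str, account_id: str
) -> Dict[str, Any]:
    """Sketch a Document Location payload from the values used in the Flow."""
    return {
        # Name and Relative URL both use the library name from the trigger.
        "name": library_name,
        "relativeurl": library_name,
        # Assumed navigation property names; confirm before relying on them.
        "parentsiteorlocation_sharepointsite@odata.bind": f"/sharepointsites({site_id})",
        "regardingobjectid_account@odata.bind": f"/accounts({account_id})",
    }


if __name__ == "__main__":
    print(build_document_location("Contracts", "1111-2222", "3333-4444"))
```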

When this is all done, we can use the Flow “on-demand” to create Document Libraries and an associated Dynamics 365 Document Location on any Accounts in our organisation that have a SharePoint site.

Note: I have not added any error handling to this Flow, but you may wish to include some guard conditions to ensure you don’t try to create Document Libraries against records with no associated SharePoint site.

Creating a Document Library from SharePoint and Syncing to Dynamics 365

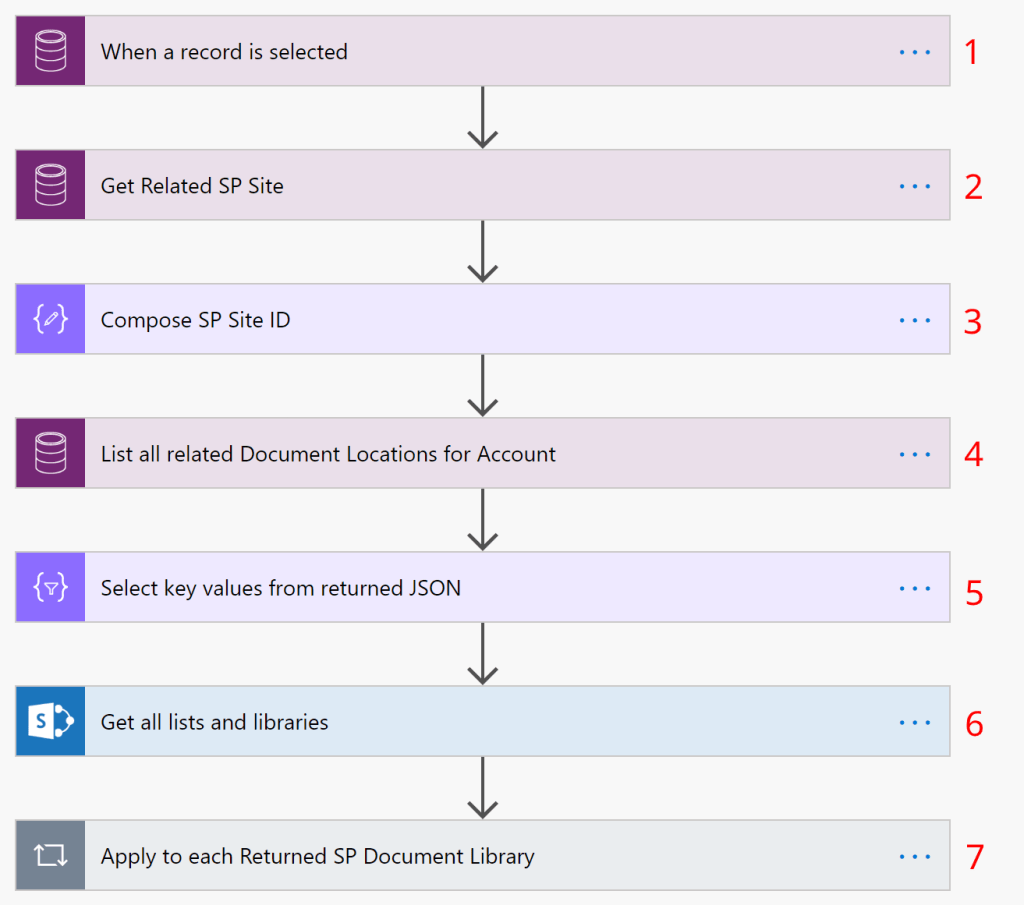

Steps 1 – 3 are exactly the same as Steps 1 – 3 in the previous Flow: use the When a Record is Selected trigger (though we don’t need an input value for this Flow), then List Records to get the associated SharePoint Site record, then a Compose step to extract the SharePoint Site ID.

4. List all related Document Locations for Account – next we will use a List Records step to find all Document Locations in Dynamics 365 related to the SharePoint site record for the Account

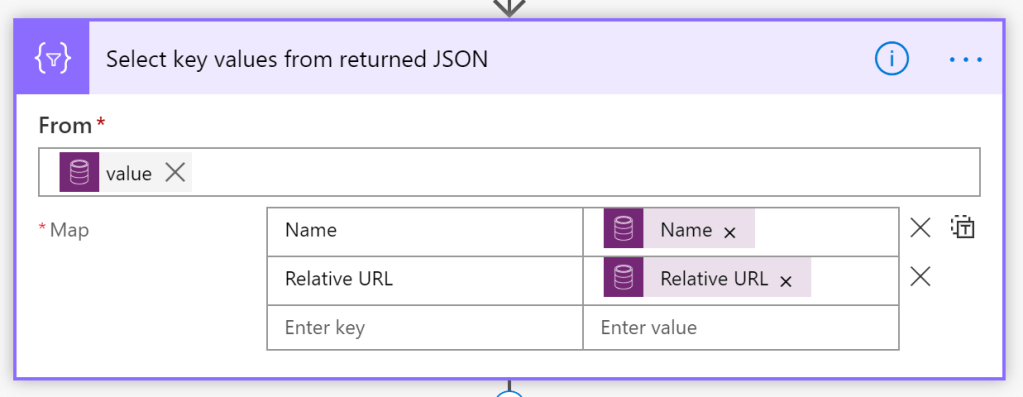

5. Select key values from returned JSON – to reduce the noise in the JSON returned by the List Records step above, we will use a Select action to extract the following values for each Document Location:

- Name

- Relative URL

- ID
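
In Python terms, the Select action is a simple projection that keeps three fields per record and drops the rest. The sketch below shows the idea; the function name is hypothetical, and the source field names (`name`, `relativeurl`, `sharepointdocumentlocationid`) are assumed logical names for the Document Location entity.

```python
from typing import Any, Dict, List


def select_key_values(document_locations: List[Dict[str, Any]]) -> List[Dict[str, Any]]:
    """Keep only Name, Relative URL and ID from each Document Location."""
    return [
        {
            "Name": record.get("name"),
            "RelativeURL": record.get("relativeurl"),
            "ID": record.get("sharepointdocumentlocationid"),
        }
        for record in document_locations
    ]


if __name__ == "__main__":
    raw = [
        {
            "name": "Contracts",
            "relativeurl": "Contracts",
            "sharepointdocumentlocationid": "5555-6666",
            "statecode": 0,  # example of a field the Select step discards
        }
    ]
    print(select_key_values(raw))
```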

6. Get all Lists and Libraries – we’ll use the standard Get all Lists and Libraries action to return a list of the Document Libraries on the site. For the Site Address we’ll input the SharePoint Site URL from the custom field on our Account entity.

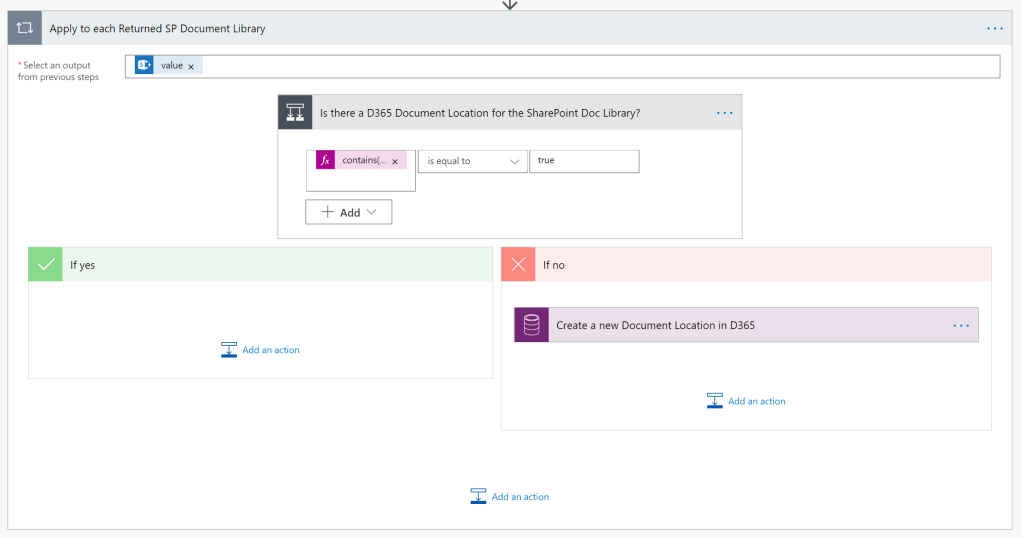

7. Apply to each returned SP Document Library – we’ll loop through the Document Libraries returned in the step above, check whether each one is already in Dynamics 365 and, if not, create a Document Location for it.

7A – Is there a D365 Document Location for the SharePoint Doc Library? – we’re using a Condition control here to check whether the SharePoint Document Library appears in the list of D365 Document Locations, using a Contains expression. The expression I’ve used is:

contains(toLower(string(body('Select_key_values_from_returned_JSON'))),toLower(items('Apply_to_each_Returned_SP_Document_Library')?['DisplayName']))
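
It is worth being clear about what this check does: it converts the whole Select output to a lower-case string and looks for the library's display name anywhere inside it. The Python sketch below mirrors that, alongside a stricter exact-match variant for comparison. Both function names are hypothetical, and the stricter variant is only an illustration, not something the Flow above uses.

```python
import json
from typing import Any, Dict, List


def contains_check(selected: List[Dict[str, Any]], display_name: str) -> bool:
    """Mirror the Flow condition: substring match on the serialised array."""
    serialised = json.dumps(selected, ensure_ascii=False).lower()
    return display_name.lower() in serialised


def exact_check(selected: List[Dict[str, Any]], display_name: str) -> bool:
    """Stricter variant: compare against each Name value exactly."""
    wanted = display_name.lower()
    return any((item.get("Name") or "").lower() == wanted for item in selected)


if __name__ == "__main__":
    existing = [
        {"Name": "Contracts Archive", "RelativeURL": "Contracts Archive", "ID": "1"}
    ]
    print(contains_check(existing, "Contracts"))  # True
    print(exact_check(existing, "Contracts"))  # False
```

Because the substring check matches "Contracts" inside "Contracts Archive", a library whose name is contained in another location's name would be treated as already synced. For most sites that is unlikely to matter, but it is something to keep in mind.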

7B – If No then Create a New Document Location in D365 – if no D365 Document Location exists for the SharePoint Document Library, we’ll use a Create a new Record action to create one. We need to input the following information:

- Name – we will use the DisplayName from the returned SharePoint Document Library

- Service Type Value – set this to SharePoint

- Relative URL – we will use the DisplayName from the returned SharePoint Document Library

- Parent Site or Location ID – we will use the output from the Compose step at Step 3 above

- Parent Site or Location Type – set this to SharePointSites

- Regarding Object ID – we will set this to the Account ID from the trigger

- Regarding Object Type – set this to Accounts

Note: if a User inputs any invalid characters in the title of the Document Library on create, or changes the name of the Document Library after it is created, then this Flow may not work as expected. In those situations we’d have to include workarounds using the “Send HTTP Request to SharePoint” action and the REST API.
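
One possible shape for such a workaround, offered only as a sketch: ask the REST API for each library's root folder name and use that for the Relative URL instead of the display name, since the folder name is what appears in the URL. The function names are hypothetical, and the single-item response shape used here is an assumption; the `$select`, `$expand` and `$filter` query options themselves are standard for the SharePoint REST API.

```python
from typing import Any, Dict
from urllib.parse import quote


def build_library_lookup_url(site_url: str) -> str:
    """Build a REST URL listing Document Libraries with their root folder names."""
    query = (
        "$select=Title,RootFolder/Name"
        "&$expand=RootFolder"
        "&$filter=" + quote("BaseTemplate eq 101")
    )
    return site_url.rstrip("/") + "/_api/web/lists?" + query


def relative_url_for(list_item: Dict[str, Any]) -> str:
    """Prefer the root folder name; fall back to the title if it is missing."""
    root = list_item.get("RootFolder") or {}
    return root.get("Name") or list_item.get("Title", "")


if __name__ == "__main__":
    print(build_library_lookup_url("https://contoso.sharepoint.com/sites/acme"))
    renamed = {"Title": "Client Contracts", "RootFolder": {"Name": "Contracts"}}
    print(relative_url_for(renamed))
```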

Conclusion

With the two Flows I have outlined above, we can enable Users to add Document Libraries to their SharePoint sites from either the Dynamics 365 record or the SharePoint site, and to interact with them directly from the Dynamics 365 record. From my own experience, I know that this kind of flexibility is appreciated by Users.